Numerical Linear Algebra In Machine Learning¶

Contents¶

Introduction

Introduction To Function Approximation

Introduction To Regression

Linear Solvers For Least Squares Regression

Cholesky Factorization For Normal Equations

Singular Values Decomposition

Controlling For Overfitting With Regularization

Implementation in Scikit-learn

A Touch Of Recommendation Systems

Where To Go From Here

Introduction¶

In this blogpost we'll go over applications of numerical linear algebra in machine learning starting out with regression and ending with modern recommender systems! Numerical linear algebra (and numerical analysis more generally) was one of thoses courses that I learned, thought was boring and never wanted to study again. Only with maturity that comes with age (and a PhD) was I able to understand and appreciate the true power of numerical linear alebra. Infact understanding (distribued) linear algebra is probably one of the most important and useful tools I have ever learned. It has allowed me to contribute to open source libraries for scientific computing and understand how big data and machine learning systems work. The reason why numerical linear algebra is so important is because it allows us to approximate functions. In scientific computing and machine learning one is interested in how to approximate a function $f(x)$. Numerical analysis and statistics concerns itself with how good is our approximation to $f(x)$?

Traditionally algorithms in Numerical Linear Algebra are taught/learned in Matlab for easy learning, but written for production Fortran and C/C++ for high performance. The fields of Data Science, Machine Learning and Artifical Intelligence have a seen recent boom and given a new life and applications to these algorithms. These fields lean more to using Python (though R is often used as well) as the primaty programming language, but make heavy use of wrappers to BLAS and LAPACK libraries written in Fortran and C/C++.

Many Data Scientists and Machine Learning Researchers also heavily make use of Jupyter notebook as a tool for rapid prototyping and writing experiments in Python (though it also allows for the use of other languages likes R, Julia and Scala). Jupyter notebook is as an interactive envrionment for computing that runs in your web browser and is very similar to Mathematica's notebook. The fact it runs in a web browser is quite convenient as it will allows users to easily work on a remote computer without any complicated set up. See this post about how to get set up with Python & Jupyter notebooks on Google Cloud with all the dependencies installed for this post.

Introduction To Function Approximation¶

Let's now move onto function approximation. One learns in Calculus to represent smooth functions $f : \, \mathbb{R} -> \mathbb{R}$ about some point $x_0$ using special polynomials called power series or Taylor series:

\begin{equation} f(x) \, = \sum_{n=0}^{\infty} \, a_n (x - x_0)^{n} \end{equation}We can find the coefficients to the series by the equation,

\begin{equation} a_{n} \; = \; \frac{1}{n!} \, f^{n}(x_0) \end{equation}We observed that there is a radius of convergence $R \, \in \mathbb{R}$ such that within $(x_0 - R, x_0 + R)$ (one should test the end points too) the series converges to $f(x)$ uniformly and outside that it does not necessarily converge to $f(x)$.

We then learned that we can approximate our function $f(x)$ with the finite degree polynomial,

\begin{equation} f(x) \, \simeq \, f_N(x) \, = \, a_0 \, + \, a_1 (x - x_0) \, + \,a_2 (x - x_0)^2 \, + \ldots \, + \, a_N (x - x_0)^N \end{equation}We can then use that approximation to perform calculations on our function that might not have been possible otherwise. The most memorialble for me being learning how to integrate a Gaussian. Though in practice numerical integration is usually perferred for finite integrals.

Power series were originaly developed to approximate solutions to differential equations. As science and engineering progressed the differential equations become more complicated and harder to solve. Other approximation methods were invented like Fourier Series:

\begin{equation} f(x) \, \simeq \, f_N(x) \, = \, \frac{a_0}{2} \, + \sum_{k=1}^{N} \left(a_n \cos \left(\frac{2 \pi k x}{L} \right) + b_n \sin \left(\frac{2 \pi k x}{L} \right) \right) \end{equation}We remark that the functions $\cos\left(\frac{2 \pi k x}{L}\right)$ and $\sin\left(\frac{2 \pi k x}{L}\right)$ form an orthogonal basis for the Hilbert Space $L^{2}([-L,L])$. Coefficients for the approximations are determined projecting the function onto the basis functions:

\begin{equation} a_n \; = \; \frac{2}{L} \, \int_{-L}^{L} \, f(x) \,\cos \left(\frac{2 \pi n x}{L} \right) \, dx \end{equation}\begin{equation} b_n \; = \; \frac{2}{L} \, \int_{-L}^{L} \, f(x) \,\sin \left(\frac{2 \pi n x}{L} \right) \, dx \end{equation}The convergence of the Fourier series is much subtle issue. While the Taylor series was guaranteed to converge uniformly, the regularity conditions imposed to achieve this were quite strong. The class of functions in $L^{2}([-L,L])$ is quite broad and depending on the regularity conditions of our specific function we will get different modes of convergence.

Let's derive the second equation for $b_n$ by using a "projection" onto the basis function $\sin \left(\frac{2 \pi n x}{L} \right)$,

\begin{align} \int_{-L}^{L} \sin \left(\frac{2 \pi n x}{L} \right) \, \left[\frac{a_0}{2} \, + \sum_{k=1}^{N} \left(a_n \cos \left(\frac{2 \pi k x}{L} \right) + b_n \sin\left(\frac{2 \pi k x}{L} \right) \right) \right] \, dx \; &= \; \int_{-L}^{L} \sin \left(\frac{2 \pi n x}{L} \right) \, f(x) \, dx \\ b_n \, \int_{-L}^{L} \sin^2 \left(\frac{2 \pi n x}{L} \right)\, dx \; &= \; \int_{-L}^{L} \sin \left(\frac{2 \pi n x}{L} \right) \, f(x) \, dx \\ b_n \, \frac{L}{2} \; &= \; \int_{-L}^{L} \, f(x) \,\sin \left(\frac{2 \pi n x}{L} \right) \, dx \end{align}Which finally becomes,

\begin{equation} b_n \; = \; \left(\frac{L}{2} \right)^{-1} \int_{-L}^{L} \, f(x) \,\sin \left(\frac{2 \pi n x}{L} \right) \, dx \end{equation}The above example illustrates the two steps in our approximation methods:

Projecting the function (or its values) onto a finite dimensional space

Solving for the co-efficients corresponding to each of the basis functions

The last step is not usually as easy as the above example was and often requires solving a system of linear equations:

\begin{equation} A x \, = \, b \end{equation}The need to solve a system of linear equations occurs most often because the basis for our finite dimensional space is not orthogonal. If the basis were orthogonal, then the matrix would be diagonal and we could be invert it by hand, leading to equations like the Fourier coefficient equations.

Each of these steps in our approximations methods introduces their own errors:

The error in your model approximation.

The error in solving the linear system of equations.

The first error best summarized in the bias-variance trade off (see Section 2.2 in this book as well). By this I mean that by assuming that our function has only $p$ features we introduce a bias into our model and as we increase the number we will most likely increase the variance in our model. The second error is due to rounding errors and numerical stability. We won't touch on either of these topics too much, but mention them for completeness.

With the advent of the computer and seemingly cheap and unlimited computational resources numerical methods like finite difference and finite element methods were invented to approximate solutions to differential equations. The finite element method is one that is particularly dear to my heart and has concepts that have proved useful in understanding models in statistics and machine learning, particularly, Generalized Addative Models.

In the next section well go into the basics of Linear Regression models which is a subset of the larger class of Generalized Additive Models.

Introduction To Regression¶

Linear regression is generally the first statistical modeling technique one learns. It involves relating some target $y$ that is a continuous random variable to $p$ "features" represented as a vector: $\textbf{x}_{i} = [1, x_{1}, x_{2}, \ldots, x_{p}] \; \in \, \mathbb{R}^{p+1}$ using coefficients $\boldsymbol \omega = [\omega_0, \omega_1, \omega_2, \ldots, \omega_p] \; \in \, \mathbb{R}^{p+1}$. The coefficient $\omega_0$ is called the "intercept." The features can have continuous and non-negative integer values and are generally considered to be non-random. The relationship between the target values and features values is described by the model,

\begin{equation} y_{i} \; =\; \boldsymbol \omega^{T} \textbf{x}_{i} + \epsilon_{i} \end{equation}Where $\epsilon_{i} \, \sim \, N(0,1)$ are independent and normal distributed with mean 0 and variance 1 and $\boldsymbol \omega \, \in \mathbb{R}^{p+1}$ are the unknown co-efficients. The fitting the linear regression model then becomes,

\begin{equation} h_{\boldsymbol \omega}( \textbf{x}_{i}) \; =\; \boldsymbol \omega^{T} \textbf{x}_{i} \end{equation}Fitting the model then becomes finding the cofficients $\boldsymbol \omega$ that best approximates $y_i \; = \; h_{\boldsymbol \omega}( \textbf{x}_{i})$ for $i=1, \ldots, n$. This model is often called ordinary least squares and converges in probability.

In general we will have many distinct measurements $\textbf{x}_{i}$ corresponding to values $y_i$ that are collected into a training set, $D_{\text{Train}} \, = \, \left\{ (\textbf{x}_{i}, \, y_{i}) \right\}_{i=1}^{n}$. This can be represend in the form $D_{\text{Train}} \, = \, (\textbf{X}_{\text{Train}}, \textbf{y}_{\text{Train}}$), where $\textbf{X}_{\text{Train}} \in \mathbb{R}^{n \times (p+1)}$ is the Design Matrix and $\textbf{y}_{\text{Train}} \in \mathbb{R}^{n}$ is the vector corresponding of target values. We also have a test set, $D_{\text{Test}} \, = \, \left\{ (\textbf{x}_{i}, \, y_{i}) \right\}_{i=1}^{m}$. This can be represend in the form $D_{\text{Test}} \, = \, (\textbf{X}, \textbf{y}$), where $\textbf{X}_{\text{Test}} \in \mathbb{R}^{m \times (p+1)}$ and $\textbf{y}_{\text{Test}} \in \mathbb{R}^{m}$ is the vector corresponding of target values. The training set is the set of points we use to determine $\boldsymbol \omega$ and the testing evaluating the performance of our model. For ease of notation we will simply drop the +1 from the $p+1$, but the reader should always remember there is an intectept. Let's make things concrete using some example data.

The data we will use (data.csv) comes from the NYC Mayor's Office of Sustainbility and contains energy efficiency measurements of multi-family housing units in New York City. We will use some of the measurements to predict the Green House Gas Emissions of the buildings, you can read more how this data was cleaned here. The file has the following columns:

NGI: Nautral Gas Use Intensity (kBtu/ft 2 )EI: Electricty Use Intensity (kBtu/ft 2 )WI: Water Use Intensity (kga/ft 2 )Site_EUI: Site Energy Usage Intensity (kBtu/ft 2 )GHGI: Green House Gas Emissions Intensity (Metric Tons CO2e / ft 2 )

We can import the data using Pandas which gives us a fast way to read in data from csv documents:

import pandas as pd

#columns we care about

cols = ['NGI','EI','WI','Site_EUI','GHGI']

# read the data

df = pd.read_csv("data.csv",

usecols = cols)

#view the first 5 lines

df.head()

We split out dataframe into a training and test set:

df_test = df.sample(frac=0.25, random_state=42)

df_train = df.drop(df_test.index, axis=0)

Then our target variable then is the GHGI and design matrix becomes the rest of the columns:

import numpy as np

# Design matrix

X = df_train[['NGI','EI','WI','Site_EUI']].values

# Target vector

y = df_train[["GHGI"]].values[:,0]

# Design matrix

X_test = df_test[['NGI','EI','WI','Site_EUI']].values

# Target vector

y_test = df_test[["GHGI"]].values[:,0]

print("X = \n", X)

print()

print("X.shape = ", X.shape)

print()

print("y = \n", y)

print()

print("X_test.shape = ", X_test.shape)

$X$ and $y$ are now a NumPy matrix and array respectively and the same for $X_{\text{test}}$ and $y_{\text{test}}$. NumPy is Python library that has highly optimized data structures and algorithms for linear algebra. Infact, Pandas is written on top of NumPy.

We can then look at the relationship or correlation each of the features has on the target variables by looking at their scatter plots using a function I wrote:

from plotting import plot_results

%matplotlib inline

plot_results(df_test)

These scatter plots show us the correlation between GHGI and the different features. The far panel on the right shows us that as we increase Site_EUI on average we see GHGI increase linearly. This makes sense as the more energy a building uses the more green house gas it produces. The same is true of natural gas (NGI) as it is used for heating or cooking. Therefore if this goes up so should our green house gas emissions. Less intuitive may be electricity usage. We might not expect that as our electicity usage increases our green house gases increases, however, this could be due to the fact the source of the electricity comes from gas or coal. That fact this is less intuitive could also be why the shape is less linear than Site_EUI. The amount of water we use shoul not effect the green house gas emissions (maybe for heating hot water). This realiztion is displayed in the scater plot for WI as it does not show any distinct pattern.

Let's now return to the linear regression. Our goal is to approximate the target variable $y_i$ with a function $h_{\boldsymbol \omega}(\textbf{x}_{i})$. This function is a linear combination of our features,

\begin{equation} h_{\boldsymbol \omega}(\textbf{x}_{i}) \, = \, \boldsymbol \omega^{T} \textbf{x}_{i} \end{equation}We find the solution vector or coefficients $\boldsymbol \omega$ by minimizing the cost function $J \left( \boldsymbol \omega \right)$,

\begin{align} \hat{\boldsymbol \omega} \; &= \; \min_{\boldsymbol \omega} \, \frac{1}{2} \sum_{i=1}^{n} \left( y_{i} -h_{\boldsymbol \omega}( \textbf{x}_{i}) \right)^{2} \\ &= \; \min_{\boldsymbol \omega} \, \frac{1}{2} \Vert \textbf{y} - h_{\boldsymbol \omega}(\textbf{x}) \Vert^{2} \\ &= \; \min_{\boldsymbol \omega} \, J \left( \boldsymbol \omega \right) \end{align}Mininmizing $J \left( \boldsymbol \omega \right)$ is equivalent to setting the $\nabla J \left( \boldsymbol \omega \right) \; = \; 0$. We can expand the cost function,

\begin{align} J(\boldsymbol \omega ) \; &= \; \frac{1}{2} \, \Vert \textbf{y} - \textbf{X} \boldsymbol \omega \Vert^{2} \\ &= \; \min_{\boldsymbol \theta} \, \frac{1}{2} \Vert \textbf{y} - \textbf{X} \boldsymbol \omega \Vert^{2} \\ &= \; \frac{1}{2} \, \left( \textbf{y} - \textbf{X} \boldsymbol \omega \right)^{T} \left( \textbf{y} - \textbf{X} \boldsymbol \omega \right) \\ &= \; \frac{1}{2} \, \left( \textbf{y}^{T} \textbf{y} - \textbf{y}^{T} \textbf{X} \boldsymbol \omega - \boldsymbol \omega^{T} \textbf{X}^{T} \textbf{y} + \boldsymbol \omega^{T} \textbf{X}^{T} \textbf{X} \boldsymbol \omega \right) \end{align}Taking the gradient of both sides we then have (for a review on matrix calculus see here),

\begin{equation} \boldsymbol \nabla J(\boldsymbol \omega ) \; = \; \textbf{X}^{T} \left( \textbf{y} - \textbf{X} \boldsymbol \omega \right) \end{equation}Setting the above equal to zero yields the linear system of equations,

\begin{equation} \left(\textbf{X}^{T}\textbf{X}\right) \, \hat{\boldsymbol \omega} \; = \; \textbf{X}^{T} \textbf{y} \end{equation}The above formulation is the so-called "Normal Equations". We can rewrite them as,

\begin{equation} S \, \hat{\boldsymbol \omega} \; = \; \textbf{b} \end{equation}Where $\textbf{b} \, \in \mathbb{R}^{p}$ and $S \, = \, \textbf{X}^{T}\textbf{X} \, \in \mathbb{R}^{p \times p}$. When the features have been scaled (as we have assumed), the matrix $S$ is the covariance matrix

\begin{equation} \left(\textbf{X}^{T}\textbf{X}\right)_{i,j} \; = \; \text{Cov}(x_{i},x_{j} ) \end{equation}The vector $b \, = \, \textbf{X}^{T} \textbf{y} $ is the projection of the target values onto the finite dimensional space that is spanned by the features. Defining the residual r as,

\begin{equation} \textbf{r} \; = \; \textbf{y} - \textbf{X} \boldsymbol \omega \end{equation}Then we note $\nabla J \left( \hat{\boldsymbol \omega} \right) \; = \; 0$ is the same thing as saying,

\begin{equation} X^{T} \, \textbf{r} \; = \; 0 \end{equation}Or the solution $\hat{\boldsymbol \omega}$ is value such that the residual is orthogonal to our feature space or the residual is statistically uncorrelated with any of the features.

The covariance matrix is symmetric and positive definite, but if the two features are highly correlated then two rows in the matrix will be nearly identical and the matrix will be nearly singular. Statistically, having correlated features is bad since it makes it hard to determine the impact on any one feature has on the target. Numerically, having correlated features is bad because the condition number of the covariance matrix explodes. The reason is that the condition number is definted as,

\begin{equation} \kappa(S) \; = \; \Vert S \Vert_2 \, \Vert S^{-1} \Vert_2 \; = \; \frac{\sigma_{\text{max}}}{\sigma_{\text{min}}} \end{equation}Where $\sigma$ are the singular values from the singular value decomposition. As the matrix $S$ becomes more singular $\Vert S^{-1} \Vert_2 \, \rightarrow \, \infty$, forcing the $\kappa \, \rightarrow \, \infty$. We remark that the condition number affects not only the accuracy of your solution, but also the stability of the solvers, see Lecture 12.

There are two we will go over about solving the over-determined system of equations $X \boldsymbol \omega \, = \, \textbf{y}$:

Solving the Normal Equations using the Cholesky Decomposition

Solving the linear system using the Singular Value Decomposition

However, one can also solve the Normal Equations using an LU factorization or the original system with the QR factorization.

Linear Solvers For Least Squares Regression¶

See Lecture 11 for more detailed discussions.

Linear Solver 1: Cholesky Decomposition Of Normal Equations¶

Let's first solve the Normal Equations:

\begin{equation} S \, \boldsymbol \omega \; = \; X^{T} \textbf{y} \end{equation}Our covariance matrix $S$ is symmetrix positive definite we can use the Cholesky decomposition:

\begin{equation} S = L L^{T} \end{equation}where $L$ is an lower triangular matrix. To solve the system from the nomrmal equations then becomes,

\begin{align} S \, \boldsymbol \omega \; &= \; X^{T} \textbf{y} \\ L L^{T} \, \boldsymbol \omega \; &= \; X^{T} \textbf{y} \end{align}Which we can rewrite as first solving,

\begin{equation} L \textbf{z} \; = \; X^{T} \textbf{y} \end{equation}Then solve,

\begin{equation} L^T \boldsymbol \omega \; = \; \textbf{z} \end{equation}As $L$ is an lower triangular matrix each of these linear system of equations is simple to solve and the forward/backward substitutions made to solve them are backwards stable.

Let's try this out by first analyzing the covariance matrix

# Build the covariance matrix

X_t = X.transpose()

S = X_t.dot(X)

print("S = \n", S)

Let's take a look at the eigen values to understand the condititioning of our matrix

eigs = np.linalg.eigvals(S)

print(eigs)

cond_num = np.sqrt( eigs.max() / eigs.min())

print(f"Condition number = {cond_num}")

The eigenvalues are all positive and the condition number are not too big which is good!

We will now be using many SciPy function calls. SciPy is a sister Python library with Numpy and supports many scientific algorithms. SciPy's linear algebra routines expand on NumPy's and even allow for use sparse matrices and vectors. SciPy acts a wrappers around LAPACK, indeed the eigenvalue calculator directly calls LAPACK's eigenvalue decomposition.

Let's now perform the Cholesky Decomposition using SciPy:

from scipy import linalg

# Perform the Cholesky Factsaorization

L = linalg.cholesky(S, lower=True)

L

We can check to make sure that $L L^T \, = \, S$:

L.dot(L.T)

Then use the matrix $L$ to solve the normal equations using SciPy's solve_triangular (which solves a lower/upper linear system). This function directly calls LAPACK's implementation of forward/backward substitution:

# solve for the coefficents

z = linalg.solve_triangular(L, X_t.dot(y), lower=True)

omega = linalg.solve_triangular(L.T, z, lower=False)

print(f"omega : {omega}")

We can now get the predicted values from $\hat{y} \, = \, X \hat{\boldsymbol \omega}$ on the test set and plot the results against the true values y_test:

# Get the in sample predicted values

df_test["y_ols"] = X_test.dot(omega)

plot_results(df_test, 'y_ols')

Not bad! Let's move onto solving the system of equations with the singular value decomposition. I should note SciPy implements a Cholesky solver that directly calls LAPACK's routine.

Linear Solver 2: The Singular Value Decomposition (SVD)¶

Let's solve the overdetermined system of equations,

\begin{equation} X \, \boldsymbol \omega \; = \; y \end{equation}using the Singular Value Decomposition (SVD):

\begin{equation} X \; = \; U \Sigma V^{T} \end{equation}Where $U \, \in \mathbb{R}^{n \times n}$ and $V \, \in \mathbb{R}^{p \times p}$ are unitary matrices and $\Sigma \, \in \mathbb{R}^{n \times p}$ is a diagonal matrix with the singular values along the diagonal. We can substitute in the SVD into our system of equations to obtain,

\begin{align} X \, \boldsymbol \omega \; &= \; y \\ U \Sigma V^{T} \boldsymbol \omega \; &= \; y \\ U^{T} U \Sigma V^{T} \boldsymbol \omega \; &= \; U^{T} \, y \\ \Sigma V^{T} \boldsymbol \omega \; &= \; U^{T} \, y \end{align}We then transform this to a system of equations:

\begin{equation} \Sigma \, \textbf{z} \; = \; U^{T} \, y \end{equation}and

\begin{equation} V^{T} \boldsymbol \omega \; = \; \textbf{z} \end{equation}The last system of equation can be transformed using the fact $V$ is unitary ($VV^{T} \, = \, I$):

\begin{equation} \boldsymbol \omega \; = \; V \textbf{z} \end{equation}We can then solve the original system of equations $X \, \boldsymbol \omega \; = \; y$ by

Finding the SVD of the Design Matrix $X \; = \; U \Sigma V^{T}$ then solve,

Inverting the diagonal matrix to solve $ \textbf{z} \; = \Sigma^{-1} \; U^{T} \, y$

Perform the matrix-vector multiplication: $\boldsymbol \omega \; = \; V \textbf{z}$

We can perform the SVD using SciPy (which to no surprise calls LAPACK's routine):

U, Sig, Vt = linalg.svd(X, full_matrices=False)

We can then look at the shapes of each of the resulting matrices:

U.shape, Sig.shape, Vt.shape

And then perform the steps described above to find $\boldsymbol \omega$,

z = (1 / Sig) * U.transpose().dot(y)

omega = Vt.transpose().dot(z)

print(f"omega : {omega}")

The solution via Cholesky Factorization and the SVD have the same solutions!

One very nice thing about linear regression is that it is very easy to interpret. In linear regression, the coefficients tell us how much an increase in one unit of the feature increases the target variable by one unit. Therefore increases in the sites energy usage intensity (Site_EUI) increase the GHGI the most and intuitively this makes sense. However, the model is also telling us that the increases in natural gas usage (NGI) decrease the GHGI which doesn't make sense. This most likely happened because the features are correlated (see off diagonal values in covariance matrix $S$) or because the model was over-fitting, i.e. giving to much weight to a specific feature.

Controling For Overfitting With Regularization¶

Often times a linear regression will give us good results on the training set, but on very bad results on another independent (test) dataset. This is an example of overfitting our model. We can control for overfitting our model by introducting regularization of the form,

\begin{align} J(\boldsymbol \omega ) \; = \; \frac{1}{2n} \sum_{i=1}^{n} \left( y_{i} - h_{\boldsymbol \omega}(\textbf{x}_{i}) \right)^{2} + \frac{\lambda}{q} \sum_{i=1}^{p} \vert \omega_{i} \vert^{q} \end{align}where $\lambda \, \geq \, 0$. For $q=2$ this is called Ridge Regression and for $q=1$ it is called Lasso Regression). Ridge Regression serves to control for over-fitting by shrinking the parameters $\omega_{i}$ as $\lambda$ increases. Not all individual parameters must shrink as $\lambda$ increases, but in aggregate $\Vert \boldsymbol \omega \Vert^{2}_{\ell_2}$ decreases. We note that both methods seek to reduce the variance in model, and subsequently increase the bias.

Remark: In neither method do we consider penalizing the value $\omega_0$.

In the case of Ridge Regression we can view the cost function as,

\begin{equation} \hat{\boldsymbol \omega} \; = \; \min_{\boldsymbol \omega} \Vert \textbf{y} - \textbf{X} \boldsymbol \omega \Vert^{2}_{2} + \lambda \Vert \boldsymbol \omega \Vert^{2}_{2} \end{equation}Which after expanding the values and setting the derivative to zero yields,

\begin{equation} \hat{\boldsymbol \omega} \; = \; \left(\textbf{X}^{T} \textbf{X} + \lambda I \right)^{-1} \left(\textbf{X}^{T} \textbf{y} \right) \end{equation}We can see that the regularization term acts to remove the singularity from the covariance matrix if there is correlation among features. Using the SVD we arrive at:

\begin{align} \left(\textbf{X}^{T}\textbf{X} + \lambda I \right) \, \boldsymbol \omega \; &= \; \left(\textbf{X}^{T} \textbf{y} \right) \\ \left( V \Sigma^{T} \Sigma V^{T} + \lambda I \right) \, \boldsymbol \omega \; &= \; \left(V \Sigma^{T} U^{T} \right) \, \textbf{y} \\ V \left( \Sigma^{T} \Sigma + \lambda I \right) V^{T} \, \boldsymbol \omega \; &= \; \left(V \Sigma^{T} U^{T} \right) \, \textbf{y} \\ \left( \Sigma^{T} \Sigma + \lambda I \right) V^{T} \, \boldsymbol \omega \; &= \; \Sigma^{T} U \, \textbf{y} \end{align}We can transform the last equation to the system of equations:

\begin{equation} \left( \Sigma^{T} \Sigma + \lambda I \right) \textbf{z} \; = \; \Sigma^{T} U^{T} \, \textbf{y} \end{equation}with the system corresponding equation $V^{T} \, \hat{\boldsymbol \omega} \; = \; \textbf{z}$ which can be rewritten using the unitary property of $V$ as the matrix-vector multiplication:

\begin{equation} \hat{\boldsymbol \omega}\; = \; V \textbf{z} \end{equation}The matrix, $\left( \Sigma^{T} \Sigma + \lambda I \right) \, \in \mathbb{R}^{p \times p}$ is diagonal and can be inverted by hand. This gives us a way of solving the problem for Ridge Regression for a given $\lambda$:

Finding the SVD $X \; = \; U \Sigma V^{T}$ then solve

"Invert" the matrix to obtain $ \textbf{z} \; = \; \left( \Sigma^{T} \Sigma + \lambda I \right)^{-1} \, \Sigma^{T} \, U^{T} \, \textbf{y}$

Perform the matrix-vector multiplication $\hat{\boldsymbol \omega} \; = \; V \textbf{z}$

We remark we can combine the 2. and 3. to obtain the formula:

\begin{equation} \hat{\boldsymbol \omega} \; = V \, \left( \Sigma^{T} \Sigma + \lambda I \right)^{-1} \, \Sigma^{T} \, U^{T} \, \textbf{y} \end{equation}And then our predictions $\hat{y} \; = \; X \, \hat{\omega}$ become:

\begin{align} \hat{y} \; &= \; X \, \hat{\omega} \\ &= \; U \, \Sigma \, \left( \Sigma^{T} \Sigma + \lambda I \right)^{-1} \, \Sigma^{T} \, U^{T} \, \textbf{y} \\ &= \; U \, D \, U^{T} \, \textbf{y} \end{align}As you may have learned the matrix $U$ is unitary so it acts a change of basis transformation and the matrix $D \, = \, \Sigma \, \left( \Sigma^{T} \Sigma + \lambda I \right)^{-1} \, \Sigma^{T} \, \in \mathbb{R}^{n \times n}$ is a diagonal matrix that either shrinks or elongates along this new basis as shown below:

| From https://upload.wikimedia.org/wikipedia/commons/b/bb/Singular-Value-Decomposition.svg |

The values along the diagonal of $D$ take the form,

\begin{equation} D_{i,i} \; = \; \frac{\sigma_{i}^{2}}{\sigma_{i}^{2} + \lambda} \end{equation}From this formulation $D$ acts to shrink the influence of low frequency singular vectors ($\sigma$ small) more than it does high frequency singular vector ($\sigma$ large)!

Let's see how our code will look for solving the Ridge Regression problem using the SVD.

# using function annotations in Python 3

# (see, https://www.python.org/dev/peps/pep-3107/)

import scipy as sp

def ridge_svd(

X : np.ndarray,

y : np.ndarray,

reg_param : float = 1.0

) -> np.ndarray:

"""

Solves the Ridge Regression problem using the SVD.

Params:

-------

X : The matrix of features

Y : The target vector

reg_param : The regularization parameter

Returns:

--------

omega : The array of coefficent for the solution

"""

# compute the SVD

U, Sig, Vt = sp.linalg.svd(X, full_matrices=False)

# for Sigma^{T} Sigma + \lambda (this is an array)

Sig_plus_reg = Sig * Sig + reg_param

# find z by "inverting" the matrix and then get omega

z = (1.0 / Sig_plus_reg) * Sig * U.T.dot(y)

omega = Vt.T.dot(z)

return omega

Let's test this with $\lambda \, = \, 0$ which should give us the least squares regression solution:

print("omega: ", ridge_svd(X, y, 0.0))

It does! Let's try adding a little regularization and see what happens.

omega = ridge_svd(X, y, 0.1)

print(f"omega: {omega}")

We can see that adding regulaization shrank the last feature, i.e. (Site_EUI) and made turned the coefficient for NGI positive which makes sense! Let's take a look at the results:

df_test["y_ridge"] = X_test.dot(omega)

plot_results(df_test, 'y_ols', 'y_ridge')

It's hard to say whether least squares or ridge regression is better from these figures, but the coefficients for Ridge Regression make much more sense. Notice that I have left out how to find the regularization parameter $\lambda$. That topic is out of scope for this post. However, for reference, the best value for $\lambda$ is often chosen via cross-validation, see these slides for more information.

Implementation In Scikit-Learn¶

Scikit-Learn is the industry standard open source machine leanring library in Python. As it is open source we can easily see how the developers wrote their linear sovlers by going to the library's GitHub.

For regression, Scikit-learn mostly acts as a wrapper around Scipy which act as a wrapper to BLAS/LAPACK. The regular linear regression Scikit-Learn implementation utilizes Scipy's least square solver, which if the matrix is dense calls BLAS/LAPACK. If the matrix $X$ is sparse then it calls SciPy's sparse least squares solver which uses least squres with QR factorization LSQR. Note that while $X \, \in \mathbb{R}^{n \times p}$ may be a large sparse matrix, the covariance matrix $S \, \in \mathbb{R}^{p \times p}$ is typically quite small and dense.

For ridge regression, unless the user specifices the linear sovler to use then if the intercept is needed Scikit-learn solve the linear system with the Stochastic Average Gradient method, otherwise it uses the Cholesky Decomposition for dense matrices and Conjugate Gradient method for sparse matrices. Although, all the algorithms are written for dense or sparse matrices. The congugate gradient method makes use of SciPy's Sparse CG function which does not need to explicitly form the matrix, but rather only needs its action on a vector making it extremely efficient for sparse matrices. If any of these methods fail, the least square QR solver is used.

A Touch Of Recommendation Systems¶

Recommendation systems have become very popular and are one of the best examples of Machine Learning that we interact with daily, i.e. Amazon and Netflix. One popular technique for making recommendations is called Alternating Least Squares.

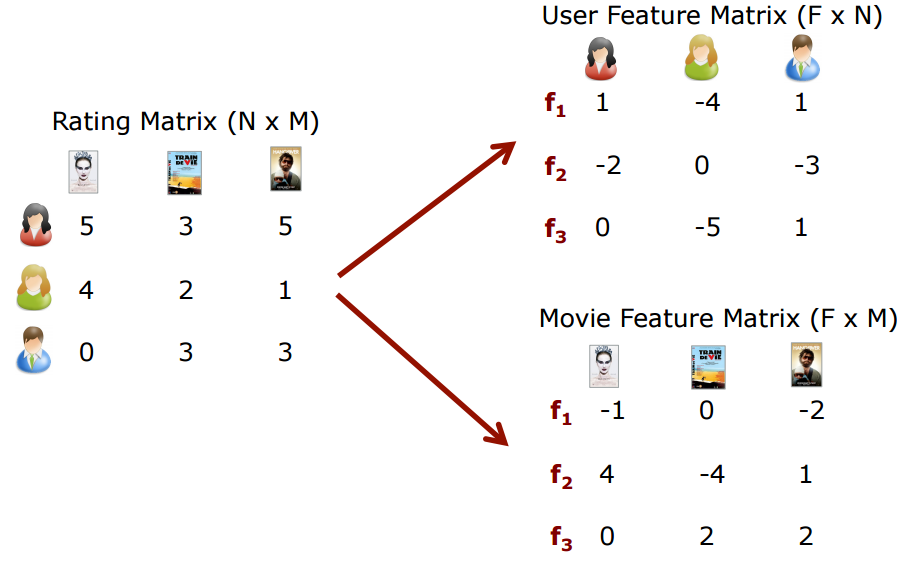

The idea is that we have some table of ratings of items by users as shown below:

|

|---|

| From https://recsysjd.wordpress.com/2016/09/10/matrix-factorization-techniques-for-recommender-systems |

However, not all users will have rated all items and we want to predict how a user may feel about an item they have not rated. This is how recomendations happen, through the prediction of how a user might rate an item they have not seen before!

Let's for example take the idea of recommending movies to users of a webservice. The idea is we want to say a given user has some preference on a movie by the perfence of the generes that make up the movie. For simplicity let's say movies are a combination of the genres (so-called latent factors in machine learning):

- Horror

- Action

- Comedy

Then we can write a user's preference as well a movie as a linear combinatin of these genres:

\begin{align} \text{Sally} \; &= \; 1 \times \text{Horror} + 2 \times \text{Action} - 0.2 \times \text{Comedy} \\ \ \text{Weekend At Bernies} \; &= \; 0 \times \text{Horror} + 1 \times \text{Action} + 5 \times \text{Comedy} \\ \text{Sally's Rating of Weekend At Bernies} \; &= \; 1 \times 0 + 2 \times 1 - 0.2 \times 5 \\ &= \; 1 \, \text{Star} \end{align}The above shows how Sally would rate "Weekend at Bernies" given that she likes films involving Horror and Action, but dislikes action films and that "Weekend at Bernies" is a little bit of action and a lot of comedy. If we label $R_{u,i}$ as user $u$'s rating of movie $i$, then the inner product of the vector of movie coefficients $m_{i}$ and user preferences $p_{u}$ is $R_{u,i}$ :

\begin{equation} R_{u,i} \; = \; \textbf{m}_{i}^{T} \textbf{p}_{u} \end{equation}We can then factorize the review matrix $R$ into a product of movies $M$ and users $P$ as depicted in the diagram above,

\begin{equation} R \; \simeq \; M^{T} P \end{equation}If $R \in \mathbb{R}^{n \times k}$ and we have $f$ latent factors that make a movie then the movie genres are $G \in \mathbb{R}^{f \times n}$ and user preferences are $P \in \mathbb{R}^{f \times k}$. How do we find the coefficient for each user and each movies, i.e. how do we find the matrices $M$ and $P$?

The idea is we can set up a cost function almost like Ridge Regression and minimize with respect to $M$ and $P$:

\begin{equation} \min_{M,P} \, J(M,P) \; = \; \frac{1}{2} \Vert R - M^{T} P \Vert^{2}_{F} + \frac{\lambda}{2} \left( \Vert M \Vert^{2}_{F} + \Vert P \Vert^{2}_{F} \right) \ \end{equation}Where $\Vert \cdot \Vert_{F}$ is the Frobenius Norm. How do we minimize for both $M$ and $P$? Well one way is to:

Randomly initialized $M$ and $P$ then

Fix $M$ and solve for $P$ such that $\nabla_{P} J(M,P) \; = \; 0$

Fix $P$ and solve for $M$ such that $\nabla_{M} J(M,P) \; = \; 0$

Repeat steps 1 and 2 until convergence or max iterations reached

At each stage in 1. and 2. you are updating the choice and $M$ and $P$ by essentially solving a Least Squares Ridge Regression problem for $P$ and $M$. The alternating nature of this iterative algorithm gives rise to its name Alternating Least Squares! Some links to good references for Alternating Least Squares below.